December 14, 2023

XetCache: Caching Jupyter Notebook Cells for Performance & Reproducibility

Yucheng Low

My Problem with Notebooks

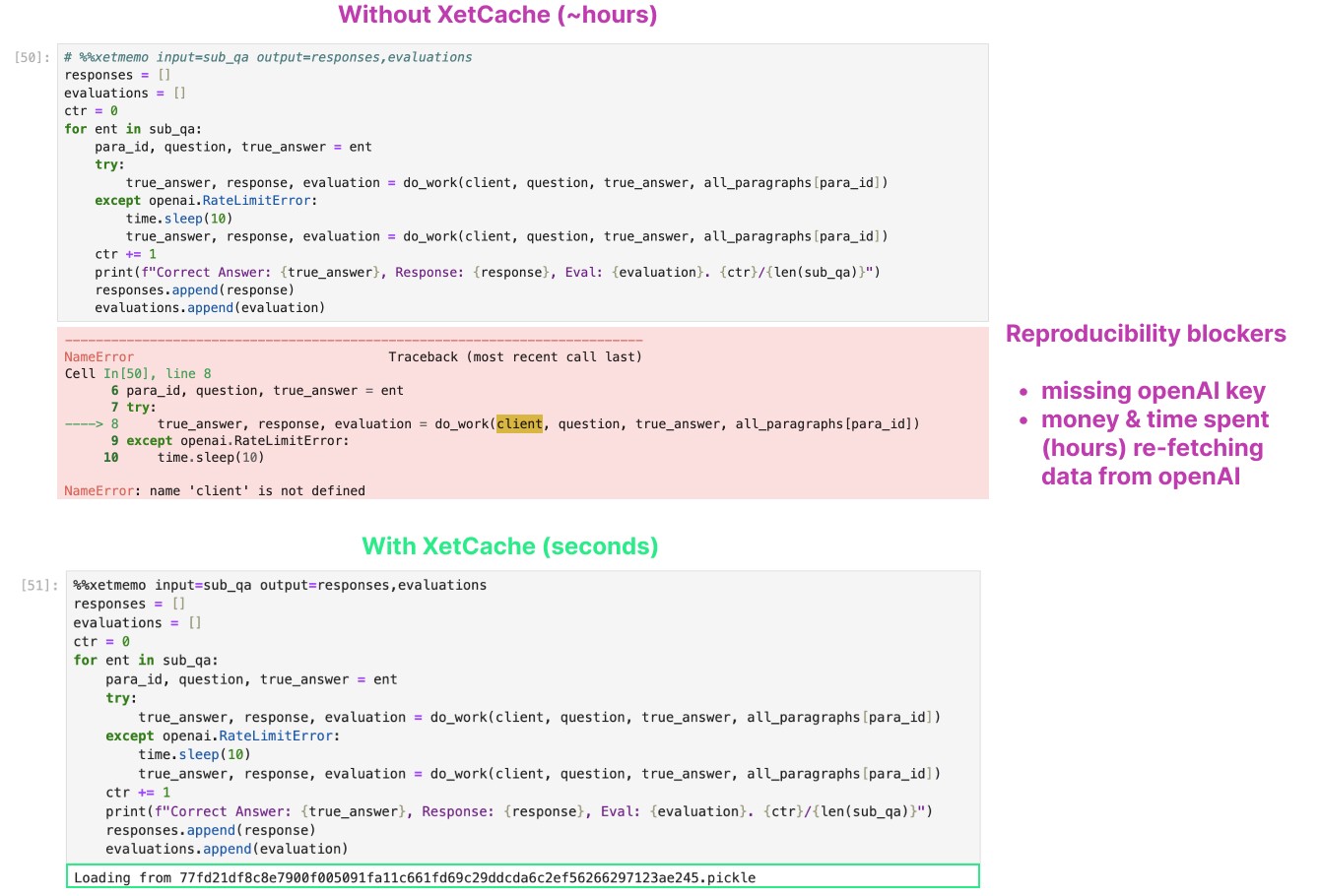

While working on the “You do not need a Vector Database” article, I ran into a very common problem: doing long running tasks in a notebook. It is very annoying to run a cell which queries OpenAI 10,000 times… then accidentally overwrite the output variable.

A common desire for notebooks, is the ability to undo:

It is very easy to accidentally overwrite and modify (expensive) state

The work needed to read and store the state from disk is really ugly.

It is hard to keep all those disk state and cell state in sync: when do you rerun a cell? which file contains what?

Fundamentally, it is hard to organize a notebook so that I can use it both for experimentation, and be able to execute it efficiently straight from top to bottom.

This has always been one of my greatest frustration with working with notebooks.

My Solution

After some experimentation, I think I have a better way. Undo, or rewinding state, is the wrong way to think about the problem. The right way is to enable redo: to make re-running cells inexpensive and allow you to easily maintain notebook top to bottom execution.

The way to do this is simply using memoization, i.e. to cache the outputs of a function so that the next time it is called with the same inputs, we can simply return it from the cache. (Coincidentally, I was just working on Advent of Code 2023 Day 12… where the solution involves memoization too.)

This idea seems to be pretty obvious, but somehow I have not come across a package to do exactly what I need. The two I have came across — ipython-cache and cache-magic — require either explicit filename management or do not take input state into consideration.

So today we are open sourcing the xetcache library, an actual memoization system for data science notebooks.

Using XetCache Locally

All you need to do is run pip install xetcache from your command line and then run import xetcache at the start of your notebook.

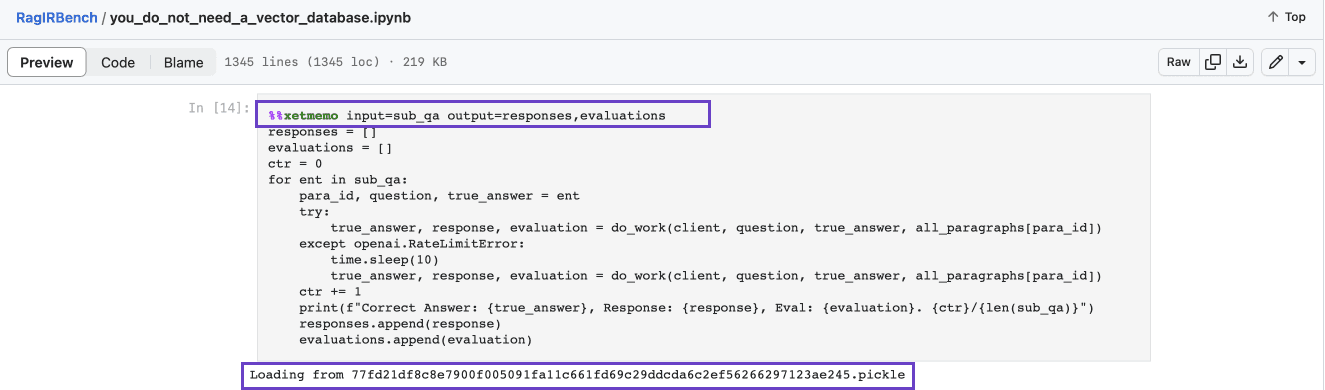

Then in any cell, we can use the xetmemo cell magic, specify the inputs we depend on and the outputs to cache.

And thats it! Future runs of the same cell will automatically load from cache.

The cache will automatically take into consideration both the contents of the input variable as well as the code in the cell. Any changes will trigger re-computation. You can even include other functions (clean_data()) you depend on as part of the input! This way if the function were to get modified, the computation will be re-triggered as well.

You can find lots of examples in the benchmarking notebook I used for my You Don’t Need a Vector Database post. By default, the cache is written to a local xmemo/ folder — cached pickle files are loaded back on re-runs as shown in the screenshot below.

Sharing Cached Notebooks in GitHub

While the xetmemo plugin is useful when working with notebooks locally in single player mode, it’s even more useful when sharing notebooks with others.

This allows everyone else using the repo to share in compute savings, and allow every historical version of notebook to be easily reproducible by anyone. For instance, say your notebook uses OpenAI calls. If the entire cell is memoized, even if you do not have access to OpenAI you can still run the cell and get the result from the cache.

While notebook sharing on GitHub is easy, cache sharing is not since GitHub can’t support larger file sizes. We get around this by using the XetData GitHub app to easily scale to TB-scale GitHub repos. Install it for yourself and just check in the entire xmemo folder to push your shared cache alongside your notebook in GitHub:

XetHub’s data deduplication means that you automatically save storage and bandwidth for similar outputs, which is extremely common when you are using notebooks for experimentation. And most importantly, you can use the lazy clone feature so that you do not even to download the entire cache:

This will only download placeholder (pointer) files for the large cache files, and xetcache will automatically fetch those on demand as they are accessed.

Other Features

XetCache also support a few other usage scenarios such as decorators or function call memoization. Here’s an example of both of these:

Check out the documentation in the XetCache GitHub page for more details.

Help us Improve XetCache

XetCache is currently in alpha and can still be improved significantly:

Recursive tracking of function dependencies

Automatically inference of cell closures

Dependency tracing

Alternate storage format for small values

Faster hashing

Storage of displayed outputs

… and many others!

We think XetCache fills a much needed gap in the notebook experience. If you want to discuss ideas for improvement or want to contribute, please join our Slack and open issues in the GitHub repo.

Share on